Wondering if your business needs an AI policy?

AI technologies are transforming business through automation, insight, and support for decision-making. They present significant opportunities for businesses equipped to make use of their potential.

However, new technology always carries new risks. Some are known – many are unknown. Notably, AI tools are primarily internet-based technologies. This brings some pretty serious privacy and data security implications.

This is especially concerning given that 11% of the data employees paste into ChatGPT is considered sensitive information.

Generative AI can access and process large amounts of data in seconds, but its online nature can expose sensitive data and critical systems to cyber threats. Misuse, whether intentional or accidental, can lead to serious consequences.

Given these opportunities, and despite the risks, it’s no longer a question of whether your business should use AI. It’s likely that many people in your business already do. A 2023 report showed that 68% of employees using generative AI did not tell their bosses.

That’s why we’re more concerned with your business using AI responsibly and securely, and why you might want to consider creating an AI policy for your business.

Key Takeaways


- AI offers significant opportunities to improve your business through automation and data insights, but it also comes with risks, particularly around privacy and data security.

- By following the ISO 42001 standard, your business can implement AI responsibly, focusing on ethics, transparency, and accountability in its AI systems.

- It’s important to identify how AI fits into your business operations, whether in customer service, marketing, or data analysis, while also being aware of risks like biases and cybersecurity vulnerabilities.

- Your AI policy should be tailored to your business’s specific goals and use cases, ensuring it covers areas where AI can add value, like streamlining data-heavy or repetitive tasks.

- To stay effective, your AI policy needs regular reviews and updates to keep up with rapid technological advancements and evolving regulations, ensuring compliance over time.

- Success depends on making sure everyone in your business understands and follows the AI policy, which requires proper training, stakeholder engagement, and continuous feedback.

Adhering to ISO 42001 Standards

If you decide to create your own AI policy, we recommend following ISO 42001 Annex A, control A.2, which covers policies related to AI. Annex A sets out reference controls that support the requirements in the main body of ISO 42001.

ISO 42001 is an international standard that specifies requirements for establishing, implementing, maintaining, and continually improving an AI Management System (AIMS). It addresses key areas such as ethics, transparency, risk management, and accountability, helping ensure that AI systems are used responsibly and effectively.

You might be wondering why you should adhere to a standard like ISO 42001.

Well, it’s a benchmark that signals that your business is taking AI governance seriously.

What Role Does AI Have In Your Business?

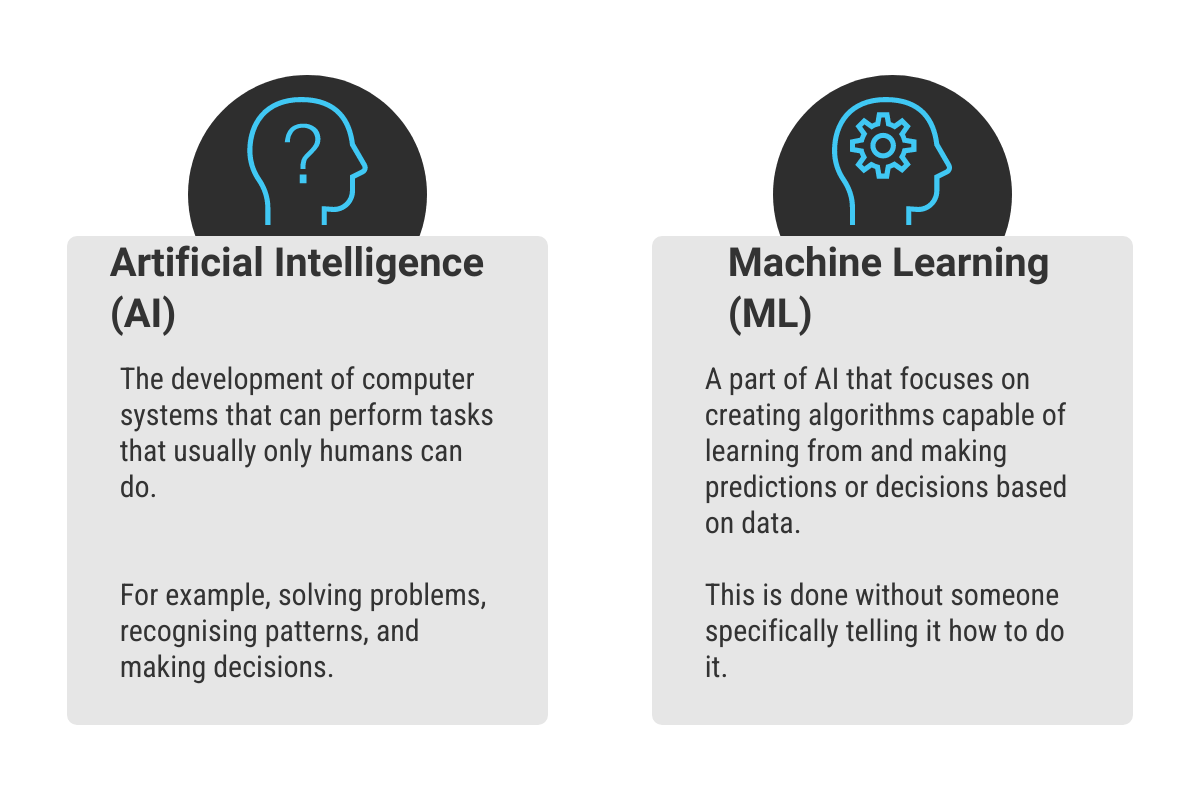

Defined broadly, AI is the simulation of human intelligence processes by machines, especially computer systems. It entails:

- Learning: acquiring information and rules for using it

- Reasoning: applying rules to reach definite or approximate conclusions

- Self-correction: refining its processes over time to improve accuracy

Machine Learning (ML) is a subset of AI. It involves using algorithms to examine data, learn from it, and then make a prediction about something in the world.
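To make "learn from data, then predict" concrete, here is a minimal sketch in Python. It uses ordinary least squares to fit a straight line to a handful of past observations, then uses that line to estimate an unseen case. The scenario (support tickets versus staff hours) and all of the numbers are invented for illustration; real ML systems use far larger datasets and more complex models, but the learn-then-predict pattern is the same.

```python
# Minimal sketch of "learn from data, then predict" using ordinary least squares.
# The scenario and data are hypothetical, chosen only to illustrate the idea.

def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Learn the slope and intercept that minimise squared prediction error."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model: tuple[float, float], x: float) -> float:
    """Apply the learned line to a new input."""
    slope, intercept = model
    return slope * x + intercept


if __name__ == "__main__":
    # Hypothetical history: support tickets per week vs. staff hours needed.
    tickets = [10, 20, 30, 40]
    hours = [5, 9, 13, 17]
    model = fit_line(tickets, hours)  # "learn" from past data
    print(predict(model, 50))         # "predict" a week with 50 tickets
```

The model only knows what the data shows it, which is also why biased or unrepresentative data leads to biased predictions.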

So how can you use AI in your business? Well, there are a number of applications: customer service chatbots, product recommendations in marketing, supply chain optimisation, and predictive financial analysis, to name a few.

There are a lot of AI tools around at the moment. Some, properly utilised, can increase efficiency, reduce costs, and give you that competitive edge.

AI development has reached a point where we’re likely to consistently see game-changing technology released over the coming years.

But…

Before we get carried away, there are risks. For instance, because AI systems rely heavily on data, they can amplify biases present in that data, leading to unfair or inaccurate outcomes.

These systems are also at risk from cyber threats. After all, many are internet-based. Remember, true privacy with any internet-based system is rare, if not non-existent.

Therefore, you want to identify areas within your business where AI and ML are beneficial, while also considering the risks. These areas might include data-heavy tasks or repetitive processes, tasks requiring deep insights from data analysis, or customer-facing tasks that could benefit from personalisation.

Identifying these areas will provide a foundation for your AI usage policy and help ensure the practical and beneficial use of AI in your business.

Considerations for an AI Company Policy

Creating an AI policy involves more than just understanding the technology and its potential. It’s about creating a framework that outlines the operational boundaries and safeguards necessary when deploying these technologies. Here are some key requirements to consider:

1. Appropriateness

You want to make sure the AI policy is appropriate for your organisation’s purpose and context. Tailor the policy to fit the specific operational realities and strategic goals of your business.

For instance, if your business only uses AI to assist with report writing, your policy will not need to cover issues relating to developing AI systems.

Determine the context in which your organisation intends to use AI, as well as the use cases you would like to pursue in the future.

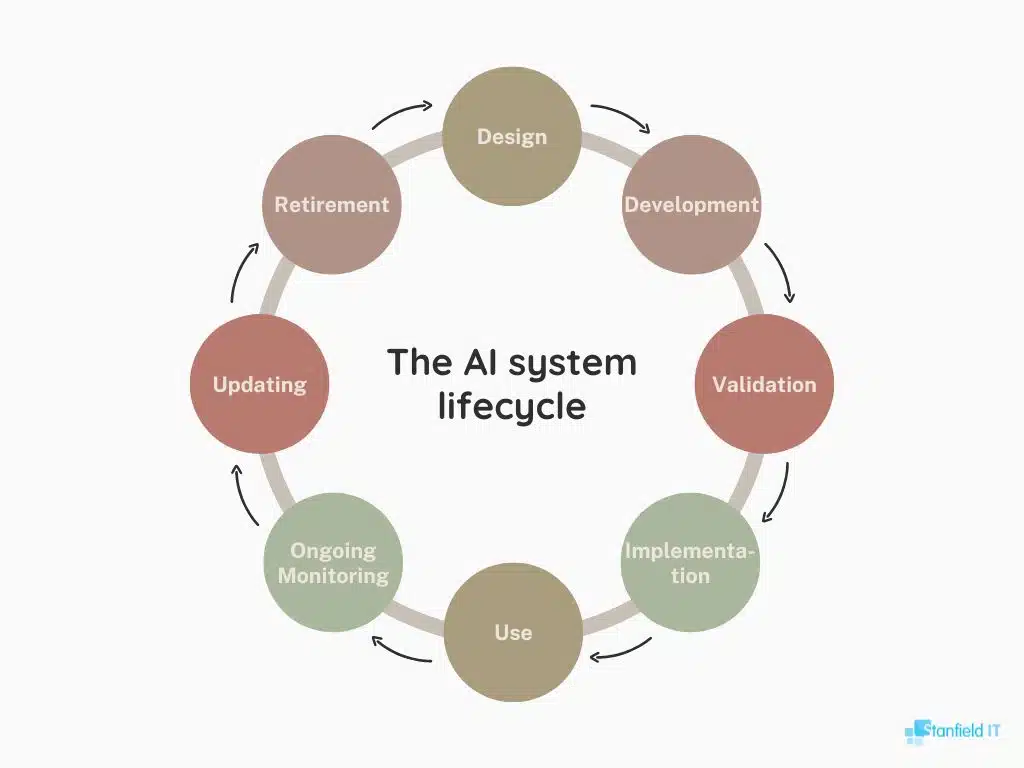

This way, you can identify the specific stages of the AI lifecycle relevant to your policy development.

2. Framework for Objectives

Provide a clear framework for setting AI objectives. This framework helps align AI initiatives with the overall business strategy, ensuring that AI efforts contribute meaningfully to organisational goals.

3. Commitment to Applicable Requirements

Include a commitment to meet all relevant legal, regulatory, and contractual obligations related to AI systems.

This ensures that AI usage adheres to necessary standards and avoids legal and ethical issues.

4. Commitment to Continual Improvement

Commit to the continual improvement of the AI management system (AIMS). Regularly assess and enhance the policy to keep pace with technological advancements and evolving regulations.

5. Documentation and Accessibility

Document the AI policy and ensure it is communicated effectively throughout your organisation.

Make the policy accessible to relevant stakeholders to foster understanding and compliance.

6. Alignment with Other Policies

Align the AI policy with other organisational policies.

Consider how AI impacts various domains such as privacy, security, and quality, and ensure cohesive integration for comprehensive governance.

Steps to Creating an AI Policy

Creating an AI policy requires planning, an understanding of the technology’s impact, and engaging with stakeholders. Here’s a step-by-step guide on how to create an effective AI policy for your business.

Set a Clear Purpose and Scope for Your AI Policy

Define clear goals and objectives for your AI policy.

Describe what the policy will cover. This could include promoting responsible AI use, ensuring compliance with relevant regulations, protecting data privacy, and mitigating AI-related risks. Align these goals with your company’s overall strategy and specific AI usage plans.

Identify the Right Stakeholders

Identifying stakeholders establishes the roles and responsibilities for AI oversight within your organisation.

Your AI policy will affect many aspects of your business, because AI may be used in areas such as data handling, IT security, HR, and customer relations.

Therefore, you need to involve people from all across your organisation. If your business is larger, this may include:

- IT experts

- Data privacy officers

- Legal advisors

- HR professionals

- Business unit leaders

If you don’t have someone in a designated role for each area, you’ll need to carefully consider how that area might be affected.

In short, engage a diverse group of stakeholders and make sure different perspectives are taken into account to help foster company-wide buy-in.

Outlining Essential Components of the AI Policy

Now, outline the essential components of your AI policy. This should include:

Data Privacy and Security Measures: Clearly define how AI systems should handle and protect data. This might involve protocols for data access, data sharing, encryption standards, and procedures for responding to data breaches.

Compliance with Regulations: Your policy should ensure that all AI usage complies with applicable laws and regulations. This includes data protection laws, industry-specific regulations, and any standards related to AI and ML technologies.

Ethical Principles: You may also want to incorporate ethical considerations in your policy. Specify acceptable and unacceptable uses of AI, and address issues such as algorithmic bias. Make a clear commitment to fairness, transparency, and accountability.

Review and Update Mechanisms: Finally, your policy should include a system for regularly reviewing and updating the policy as AI systems and related legal and societal norms evolve.

Implementing and Monitoring Your AI Policy

After creating your policy you need to ensure it’s implemented and monitored.

Training and Awareness

The success of your policy hinges on awareness and understanding across your organisation. To help with this, consider running a training program. Employees should understand the purpose and goals of the AI policy and their roles in adhering to it. This might involve workshops, online courses, or one-on-one sessions, depending on your organisation’s size and structure.

Regular Audits and Policy Updates

AI and ML technologies are evolving rapidly, which means your policy needs to evolve too. Regular audits and updates will help keep your policy current with these advancements. This might include annual or twice-yearly reviews of the policy, with adjustments made where necessary.

Also, consider engaging external auditors to provide an objective evaluation of your AI policy and its effectiveness. External audits help identify blind spots and offer valuable insights on industry standards and best practices.

Ongoing Monitoring and Evaluation

Beyond regular audits, ongoing monitoring helps ensure compliance with the AI policy and lets you assess its effectiveness. Monitoring mechanisms can range from regular check-ins with team members to more formal evaluation processes.

Encourage feedback from all stakeholders to gain a holistic view of the AI policy’s performance.

Essential Guidelines for Your AI Policy

To wrap up, here’s a list of must-have components that your AI policy should cover.

Determine the Users of AI Tools

You’ll need to decide who in your business can use these tools. Is it the entire team, a particular department, or only specific roles? Base this decision on the nature of the AI applications and the relevance to the team’s tasks.
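One way to make this decision enforceable is to record it as data that IT can check. The Python sketch below is hypothetical: the `AIUsagePolicy` class and the tool and role names are illustrative, not part of any real product. It denies by default, so a tool nobody has approved is unavailable to everyone.

```python
# Hypothetical sketch: record which roles may use which AI tools,
# then check a request against that record. All names are illustrative.
from dataclasses import dataclass, field


@dataclass
class AIUsagePolicy:
    # Maps each approved AI tool to the set of roles allowed to use it.
    approved_tools: dict[str, set[str]] = field(default_factory=dict)

    def approve(self, tool: str, roles: set[str]) -> None:
        """Grant the given roles access to a tool."""
        self.approved_tools.setdefault(tool, set()).update(roles)

    def may_use(self, role: str, tool: str) -> bool:
        """Unlisted tools or roles are denied by default."""
        return role in self.approved_tools.get(tool, set())


policy = AIUsagePolicy()
policy.approve("report-writing-assistant", {"analyst", "marketing"})
policy.approve("support-chatbot", {"customer-service"})

print(policy.may_use("analyst", "report-writing-assistant"))  # True
print(policy.may_use("analyst", "support-chatbot"))           # False
```

Deny-by-default keeps the policy safe as new tools appear: nothing is permitted until someone deliberately approves it.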

Outline Responsible Use Guidelines

Your AI policy should outline best practices for responsible use. This can include guidelines on frequency of use, the types of tasks they can be used for, and protocols to follow if the tools don’t work as expected.

Restrict Data Input

We’ll make this simple: never input customer data or any other sensitive business data into AI tools.
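Training is the main safeguard here, but some businesses also add a technical check before text reaches an AI tool. The Python sketch below is a minimal, hypothetical example: it flags email addresses and digit runs that pass the Luhn checksum used by payment card numbers. Pattern matching like this only catches obvious formats, so treat it as a backstop to the rule above, not a replacement for it. The function names are illustrative.

```python
# Hypothetical pre-submission check: flag obvious sensitive data
# before text is pasted into an AI tool. Catches common formats only.
import re

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
# Runs of 13-19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE_RE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, as used to validate payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_sensitive(text: str) -> list[str]:
    """Return a list describing the kinds of sensitive data found."""
    findings = []
    if EMAIL_RE.search(text):
        findings.append("email address")
    for match in CARD_CANDIDATE_RE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            findings.append("possible payment card number")
            break
    return findings


# 4111 1111 1111 1111 is a widely used test card number.
print(find_sensitive("Summarise: jane@example.com paid with 4111 1111 1111 1111"))
# ['email address', 'possible payment card number']
```

If the list is non-empty, the user can be warned and asked to remove the data before continuing.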

Protection of Intellectual Property

Similar to customer data, the AI policy should strictly prohibit the input of any form of intellectual property into AI tools. This will help prevent unauthorised access, misuse, or theft of your company’s proprietary information.

Leadership and Compliance

Leaders in your business need to set a positive example for AI usage, not only complying with the policy but also actively promoting adherence across their teams.

Conclusion

There are plenty of benefits to incorporating AI in your business operations. However, you need to govern its use responsibly. By creating a thorough and well-thought-out AI policy, you can strike a balance between leveraging AI capabilities and protecting your data, customer information, and intellectual property.
